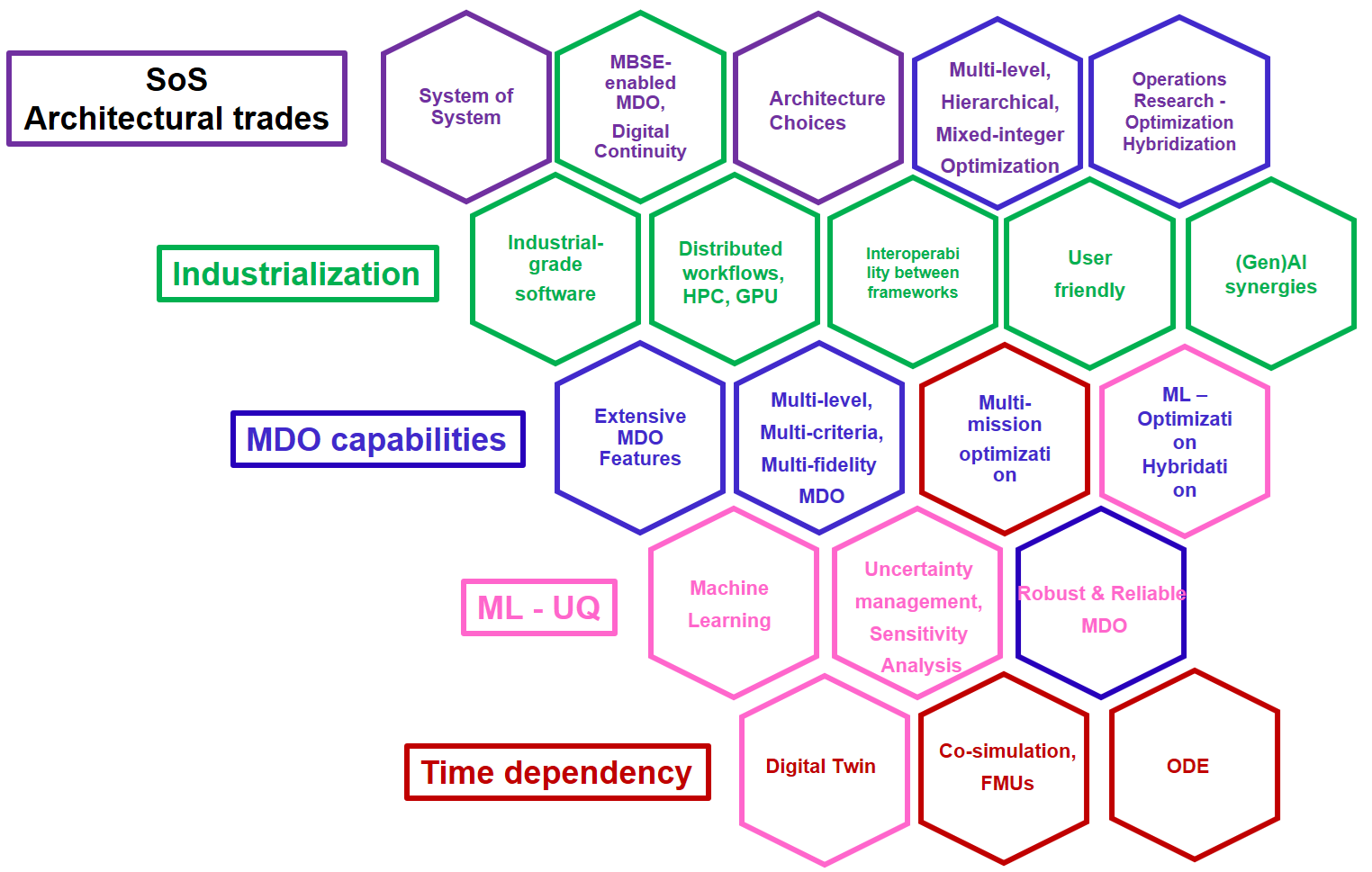

Future directions

This page outlines the topics the development team is currently working on, as well as those they would like to tackle. Feel free to contact them for more information. This page can also give you ideas on how to contribute to the software.

Benchmarking

Our roadmap aims to enrich the list of existing benchmark MDO problems with new ones and to further assess the comparative advantages of the MDO formulations. We are happy to share these elements with the MDO community and are open to collaborating on the creation of benchmark MDO problems. The gemseo-benchmark plugin allows comparing optimizers, MDO formulations, and MDAs, and could be further developed and extended to other algorithms.

GEMSEO as a service and GUIs

GEMSEO processes can be executed as RESTful web services using the gemseo-http plugin. We are convinced that this concept should be pushed further, towards configurable MDO processes that can be published and used to seamlessly build custom Graphical User Interfaces (GUIs) without any front-end development knowledge.

We would also like to improve the gemseo-streamlit plugin to facilitate the creation of streamlit-based GUIs to make certain features of GEMSEO more user-friendly.

Interoperability

We are convinced that interoperability between platforms and methodologies is key.

One objective is to interoperate GEMSEO with OpenMDAO.

The AIAA MDO Technical Committee supports the Philote standard for disciplines, and a gemseo-philote plugin is under development.

This would also allow C++, Rust, and Julia disciplines to be interfaced with GEMSEO through Philote, which supports these languages.

The gemseo-fmu plugin enables the integration, simulation and co-simulation of FMU models in a multidisciplinary context. This enables GEMSEO to connect to many tools that export dynamic simulation models as FMUs. We could improve the API to provide access to more features offered by the FMI standard.

Machine learning

- Surrogate models

  - Objective: facilitate the construction of surrogate models by automating steps and explaining the result.

  - Details: calibration, selection, visualization, global and local quality measures, benchmark, etc.

- Regression models

  - Objective: improve existing models to address a wider range of problems.

  - Details: gradient-enhanced, physics-informed, functional variables, etc.

- Active learning

  - Objective: continue integrating state-of-the-art AL libraries and adjust the algorithms as needed, in particular to take into account multidisciplinary or multi-fidelity aspects.

  - Details: optimization, exploration, quantile estimation, etc.

- Deep learning

  - Objective: develop generic wrappers for deep learning libraries.

  - Details: TensorFlow, PyTorch, etc.

MBSE-MDO digital continuity

Digital continuity from system engineering to multi-disciplinary optimization is key to scaling these methods across teams and organizations while enforcing the consistency and traceability of the data and simulation artifacts.

Such capabilities, enabling a digital thread from the product and process models and requirements to executable MDO processes, are currently being developed within several projects. More specifically, connectors to various MBSE technologies, either text-based or object-based, such as Capella, textual SysML V2, and Cameo Teamwork Cloud, are under active development, and the gemseo-sysmlv2 plugin will soon be open-sourced.

We welcome contributions on this topic, with equal interest in the methods and tools and in the use cases.

MDO formulations

Contributors are welcome to take advantage of the flexibility of GEMSEO's concepts to develop new MDO formulations. The family of multi-level formulations seems particularly promising, and the GEMSEO team would be happy to support an active collaborative community on the subject.

- Bilevel-BCD

  - Objective: improve the Bilevel-BCD formulation.

  - Details: algorithmic improvements, validation on benchmark cases.

MPI

A GEMSEO plugin has been developed to run GEMSEO MDAO processes on HPC architectures using the MPI standard. It allows executing (and linearizing) each discipline on its own MPI communicator, while GEMSEO handles the communications between disciplines (for instance in MDAs, MDOScenarios, Chains, etc.). The plugin integrates efficient direct and adjoint methods to compute derivatives (linear solvers from PETSc and matrix-free preconditioners), fixed-point acceleration methods, and an interface to all the serial optimization algorithms of GEMSEO. We are open to collaborating on real applications using these features, especially high-fidelity HPC ones.

Multi-fidelity MDO processes

Multi-fidelity MDO processes use several levels of precision for a given discipline in order to reduce the computation time. Basic refinement strategies will be distributed, together with switch criteria between the levels. The gemseo-multi-fidelity plugin provides the core features. We aim to extend it in the following directions:

- Combination with multi-level and multi-objective optimization

  - Objective: reduce the runtime of multi-objective and multi-level optimization.

  - Details: Pareto fronts computation using various algorithms, combined Pareto fronts at different levels.

- Automatic switch criteria

  - Objective: integrate gradient-based and error-based validation criteria.

- Further develop synergies between UQ and multi-fidelity

  - Objective: reduce UMDO computational costs.

  - Details: UQ offers a range of opportunities to generate levels of fidelity that combine well with the gemseo-multi-fidelity plugin. The combination of the methodologies remains to be explored.

- EGO and data fusion approaches

  - Objective: offer new types of algorithms to perform multi-fidelity MDO.

  - Details: the current approach is based on filtering and refinement (sequential execution of optimization scenarios from low to high fidelity). Other approaches, based on surrogate models, make it possible to fuse multiple data sources into a single model.
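
The filtering and refinement idea mentioned above (running optimizations sequentially from low to high fidelity) can be illustrated with a minimal sketch. It does not use the gemseo-multi-fidelity API: the two quadratic models, the `gradient_descent` helper and the tolerances are hypothetical choices made only for illustration. The low-fidelity optimum serves as the starting point of the high-fidelity optimization, which then needs fewer expensive evaluations.

```python
from typing import Callable, Tuple


def gradient_descent(
    grad: Callable[[float], float],
    x0: float,
    step: float = 0.1,
    tol: float = 1e-6,
    max_iter: int = 10_000,
) -> Tuple[float, int]:
    """Minimize a 1-D function from its gradient.

    Returns the final point and the number of gradient evaluations.
    """
    x = x0
    evaluations = 0
    for _ in range(max_iter):
        g = grad(x)
        evaluations += 1
        if abs(g) < tol:
            break
        x -= step * g
    return x, evaluations


# Hypothetical models: the low-fidelity one is cheap but slightly biased.
def grad_low(x: float) -> float:
    """Gradient of the low-fidelity objective (x - 1.9)**2."""
    return 2.0 * (x - 1.9)


def grad_high(x: float) -> float:
    """Gradient of the high-fidelity objective (x - 2.0)**2."""
    return 2.0 * (x - 2.0)


def sequential_refinement(x0: float) -> Tuple[float, int]:
    """Optimize at low fidelity first, then refine at high fidelity.

    Only the high-fidelity gradient evaluations are counted.
    """
    # A loose tolerance acts as a simple switch criterion between levels.
    x_low, _ = gradient_descent(grad_low, x0, tol=1e-3)
    return gradient_descent(grad_high, x_low)


# High-fidelity only, starting far from the optimum.
x_cold, cold_evaluations = gradient_descent(grad_high, 10.0)
# Low fidelity first, then high fidelity from the low-fidelity optimum.
x_warm, warm_evaluations = sequential_refinement(10.0)
print(f"high-fidelity evaluations: {cold_evaluations} (cold) vs {warm_evaluations} (warm)")
```

In this toy setting both runs reach the same high-fidelity optimum; the benefit of the sequential strategy depends on how well the low-fidelity model approximates the high-fidelity one.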

Optimisation algorithms

Any new algorithm that is relevant to a class of applications is of interest to the GEMSEO ecosystem. Some surrogate-based optimization capabilities, such as active learning, are under development.

- EGObox integration

  - Objective: develop the gemseo-egobox plugin to support EGObox, the surrogate-based optimization library from ONERA.

- UNO

  - Objective: interface the UNO optimization library.

  - Details: UNO is an open source generic framework that implements interior point, SQP and

- Architectural and hierarchical optimization

  - Objective: provide methods to support architectural choices and hierarchical design spaces.

  - Details: hierarchical design spaces description, adapted design of experiments and optimizers, multi-level hierarchical optimization.

- Operations research (OR) hybridization

  - Objective: provide hybrid MDO and OR methods for large scale mixed integer optimization.

  - Details: embed external OR models in MDO workflows, solve MILP problems generated from MDO processes.

- Deep learning hybridization

  - Objective: accelerate optimizers using deep learning.

Time-dependent MDO

The gemseo-petsc plugin incorporates ODE solvers with adjoint capabilities, based on PETSc TSAdjoint. This opens the door to new applications and methods.
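
To give an idea of what an ODE solver with adjoint capabilities provides, here is a minimal sketch of a discrete adjoint for the explicit Euler scheme. It does not use PETSc, TSAdjoint or the gemseo-petsc API: the toy problem dy/dt = -p * y, the output J = y(T) and the function names are assumptions made for illustration. The derivative of J with respect to the parameter p is obtained from one forward sweep followed by one backward sweep.

```python
from typing import List, Tuple


def forward_euler(p: float, y0: float, h: float, n_steps: int) -> List[float]:
    """Integrate dy/dt = -p * y with explicit Euler and return the trajectory."""
    trajectory = [y0]
    for _ in range(n_steps):
        y = trajectory[-1]
        trajectory.append(y + h * (-p * y))
    return trajectory


def adjoint_gradient(
    p: float, y0: float, h: float, n_steps: int
) -> Tuple[float, float]:
    """Return J = y(T) and dJ/dp using a discrete adjoint sweep."""
    trajectory = forward_euler(p, y0, h, n_steps)
    adjoint = 1.0  # dJ/dy_N, since J = y_N
    gradient = 0.0
    for n in reversed(range(n_steps)):
        # Step n maps y_n to y_{n+1} = y_n * (1 - p * h).
        # Contribution of the explicit dependence of step n on p:
        gradient += adjoint * (-h * trajectory[n])
        # Propagate the adjoint backward through the step: dJ/dy_n.
        adjoint *= 1.0 - p * h
    return trajectory[-1], gradient


value, gradient = adjoint_gradient(p=0.5, y0=1.0, h=0.01, n_steps=100)
print(f"J = {value:.6f}, dJ/dp = {gradient:.6f}")
```

The cost of the backward sweep does not depend on the number of parameters, which is what makes adjoint methods attractive for gradient-based optimization of time-dependent problems.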

Uncertainty quantification and management (UQ&M)

- Dependent variables

  - Objective: take into account the stochastic dependence of the variables to improve representativeness.

  - Details: copulas, dedicated visualizations, etc.

- Sensitivity analysis

  - Objective: improve existing sensitivity analysis techniques, whether in terms of API, documentation, or computational efficiency.

- Mixed-type UQ&M problems

  - Objective: extend the UQ&M features in order to support random variables other than continuous ones, e.g. discrete variables (including categorical) and random processes, whether for sources of uncertainty or for variables of interest.

  - Details: uncertainty quantification, optimization under uncertainty, sensitivity analysis, etc.

- Optimization under uncertainty

  - Objective: improve existing optimization algorithms under uncertainty, whether in terms of API, documentation, or computational efficiency.

- Reliability

  - Objective: extend UQ&M techniques to the specific aspects of reliability, incorporating estimation of probabilities and quantiles.

  - Details: reliability analysis (RA), reliability-based design optimization (RBDO), reliability-oriented sensitivity analysis (ROSA), etc.

- Multi-fidelity

  - Objective: improve the accuracy of statistical estimators using multi-fidelity techniques relying on lower-fidelity models, such as variance reduction approaches.

  - Details: control variates, surrogate models, multi-scale, etc.
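
As an illustration of the control variates technique listed above, the following sketch estimates the mean of a "high-fidelity" model using a correlated "low-fidelity" model whose mean is known exactly. It is not GEMSEO code: the two models (an exponential and its first-order Taylor expansion), the uniform input distribution and the sample sizes are assumptions chosen so that everything can be checked by hand. The control variate coefficient is estimated from the same samples, which introduces a small bias that vanishes as the sample size grows.

```python
import math
import random
import statistics
from typing import List


def high_fidelity(x: float) -> float:
    """Hypothetical expensive model; its exact mean over U(0, 1) is e - 1."""
    return math.exp(x)


def low_fidelity(x: float) -> float:
    """Hypothetical cheap approximation (first-order Taylor expansion)."""
    return 1.0 + x


LOW_FIDELITY_MEAN = 1.5  # exact mean of 1 + X for X uniform on [0, 1]


def control_variate_estimate(samples: List[float]) -> float:
    """Estimate E[high_fidelity(X)] with low_fidelity(X) as control variate."""
    f_values = [high_fidelity(x) for x in samples]
    g_values = [low_fidelity(x) for x in samples]
    f_mean = statistics.fmean(f_values)
    g_mean = statistics.fmean(g_values)
    covariance = sum(
        (f - f_mean) * (g - g_mean) for f, g in zip(f_values, g_values)
    ) / (len(samples) - 1)
    # Coefficient minimizing the variance of the corrected estimator.
    alpha = covariance / statistics.variance(g_values)
    return f_mean - alpha * (g_mean - LOW_FIDELITY_MEAN)


rng = random.Random(0)  # fixed seed so that the sketch is reproducible
mc_estimates = []
cv_estimates = []
for _ in range(200):  # repeat the experiment to compare estimator variances
    samples = [rng.random() for _ in range(50)]
    mc_estimates.append(statistics.fmean([high_fidelity(x) for x in samples]))
    cv_estimates.append(control_variate_estimate(samples))

mc_variance = statistics.variance(mc_estimates)
cv_variance = statistics.variance(cv_estimates)
print(f"plain Monte Carlo variance: {mc_variance:.2e}")
print(f"control variate variance:   {cv_variance:.2e}")
```

The stronger the correlation between the two models, the larger the variance reduction; here the low-fidelity model is highly correlated with the high-fidelity one, so the corrected estimator is much more accurate for the same number of high-fidelity evaluations.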